Last Updated on March 2, 2026

The Free-Tier API is a Free Lunch—If You Use It Right

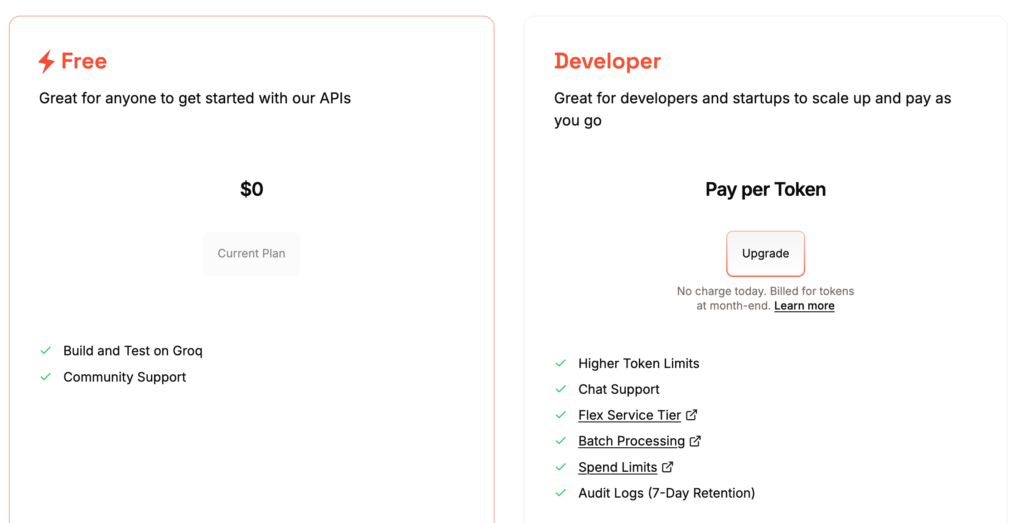

In today’s competitive AI market, providers such as Groq, OpenAI, Anthropic, Mistral, and Google are competing for users by offering free-tier APIs, a legitimate “free lunch” for the strategic user. By adopting a hybrid strategy that keeps data protection on your local machine, you can use these world-class cloud resources without paying the hidden “privacy tax.” Using an LLM proxy paired with local redaction, you can bring the Groq API directly into Microsoft Word, creating a fast, zero-cost workflow that remains under your control. Groq is also among the providers highlighted in “Best Free AI APIs: Build with LLMs Without Spending a Penny.”

📖 Part of the Hybrid AI Strategy Guide: This post is a deep-dive cluster page within our Hybrid AI Integration series—your definitive roadmap for bridging high-performance cloud intelligence with total local data control.

The Groq Advantage: Privacy by Default

In the current landscape of AI providers, Groq, alongside other performance-focused infrastructure players, is often a better choice for those wary of data mining. While many consumer-facing chatbots treat your conversations as training material, Groq’s primary differentiator is its Zero Data Retention (ZDR) policy. Their technical documentation explicitly states that data processed through their API is not used to train or improve their models, which reduces the risk of sensitive prompts resurfacing in a future model’s output. That makes it an environment both individual developers and large companies can reasonably trust.

While these “no-log” guarantees offer a high level of security, the most risk-averse users, particularly those in highly regulated industries, often seek an extra layer of protection. Relying on a provider’s policy is a strong start, but because data still travels to a third-party server, a true “zero-trust” posture requires a safeguard that does not depend on the provider at all. For those handling sensitive PII or proprietary trade secrets, redacting locally ensures that the most critical information never leaves your hardware in the first place.

Maximizing the Groq “Free Lunch” through GPTLocalhost

With GPTLocalhost, a local Word Add-in, you can fully capitalize on the high-speed, free-tier resources offered by providers like Groq. By serving as a smart intermediary, GPTLocalhost allows you to “eat the free lunch” of world-class cloud inference without the typical overhead or complex setup. You get the blistering performance of Groq’s LPU technology directly within your existing workflow.

Double Safety: Compliance Beyond the Cloud

While Groq’s Zero Data Retention policy is industry-leading, GPTLocalhost adds a secondary layer of defense through local redaction. This “double safety” measure ensures that sensitive PII and proprietary trade secrets are scrubbed on your own hardware before a prompt is ever transmitted. The hybrid strategy uses a three-step redaction workflow:

- Redact Locally: Before your draft leaves your machine, sensitive names and proprietary details are replaced with placeholders (e.g., [Person_A] or [Place_X]) within your local environment.

- Process via LLM Proxy: The sanitized text is sent to Groq through an LLM proxy, where you configure your own API key with your preferred LLM provider. GPTLocalhost connects only to the proxy, not directly to the model. As a result, GPTLocalhost does not know whether the LLM is running locally or in the cloud, and your API key is never exposed to the add-in. This setup lets you switch seamlessly between different cloud providers or local models without modifying your core Word configuration.

- Restore Locally: After the cloud AI returns the refined response, you can trigger the “unredact” prompt to restore your original sensitive data directly within Microsoft Word. This ensures the final text is completed locally, without the cloud service ever accessing the true, unredacted version of your content.
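The redact-and-restore round trip above can be sketched in a few lines of Python. This is only an illustration of the idea, not GPTLocalhost’s actual implementation: the function names `redact` and `restore`, the term-to-category dictionary, and the sample draft are all assumptions made for this example, and the cloud call is simulated with a simple string edit.

```python
from string import ascii_uppercase


def redact(text: str, sensitive: dict[str, str]) -> tuple[str, dict[str, str]]:
    """Swap each sensitive term for a placeholder such as [Person_A].

    `sensitive` maps a literal term to a category, e.g. {"Jane Doe": "Person"}.
    Returns the sanitized text and a placeholder -> original mapping.
    The mapping is meant to stay on the local machine.
    """
    mapping: dict[str, str] = {}
    counters: dict[str, int] = {}
    redacted = text
    # Replace longer terms first so a short term never clobbers part of a longer one.
    for term in sorted(sensitive, key=len, reverse=True):
        if term not in redacted:
            continue
        kind = sensitive[term]
        index = counters.get(kind, 0)
        counters[kind] = index + 1
        # This sketch supports at most 26 placeholders per category (A to Z).
        placeholder = f"[{kind}_{ascii_uppercase[index]}]"
        redacted = redacted.replace(term, placeholder)
        mapping[placeholder] = term
    return redacted, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original terms back into text returned by the model."""
    for placeholder, term in mapping.items():
        text = text.replace(placeholder, term)
    return text


# Hypothetical draft and term list, for illustration only.
draft = "Jane Doe will meet the Acme Corp board in Zurich."
terms = {"Jane Doe": "Person", "Acme Corp": "Org", "Zurich": "Place"}

sanitized, mapping = redact(draft, terms)
print(sanitized)  # [Person_A] will meet the [Org_A] board in [Place_A].

# Stand-in for the cloud model's rewrite; only the sanitized text would be sent.
reply = sanitized.replace("will meet", "is scheduled to meet")
print(restore(reply, mapping))
```

The important design point is that `mapping` never leaves the local process: the cloud model sees only placeholders, and restoration works as long as the model returns those placeholders unchanged.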

Key Comparison: Copilot vs. GPTLocalhost Hybrid

Choosing between native integration and a hybrid strategy is a trade-off between convenience and control. While Microsoft Copilot offers a “one-click” experience, it locks you into a paid subscription and limited ecosystem. In contrast, the GPTLocalhost Hybrid approach treats AI as an interchangeable utility; by using an LLM proxy, you can leverage the Groq API for Word at zero cost with a “privacy-first” posture no cloud-only provider can match.

| Feature | Microsoft Copilot | GPTLocalhost Hybrid |
| --- | --- | --- |
| Monthly Cost | Paid Subscription | $0 (Free Tier) |
| Llama 3.3 70B Integration | ❌ No | ✅ Yes |
| Claude Integration | ✅ Yes | ✅ Yes |
| ChatGPT Integration | ✅ Yes | ✅ Yes |
| Integration with More LLMs | ❌ No | ✅ Yes |
| Privacy Control | Cloud-only processing | Local Redaction + Proxy |

Hybrid in Action: The Best of Both Worlds

The Hybrid AI Strategy optimizes your workflow by treating cloud and local models as interchangeable utilities routed based on privacy, cost, and complexity. By using an LLM proxy as a central controller, you turn Microsoft Word into a powerhouse no longer limited by a single provider’s subscription or data policy, providing you with three key advantages:

- Zero-Cost Power: Leverage the “free lunch” of the Groq API for complex reasoning and long-context analysis without the subscription fee.

- Total Data Ownership: By redacting data locally before it hits the proxy, you use the cloud as a “blind” processing engine. The cloud handles the logic, but your sensitive secrets never leave your hardware.

- Future-Proof Flexibility: Unlike the rigid walls of Copilot, you can swap cloud and local models easily, ensuring you always have the best tool for the specific task at hand.
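To make the routing idea concrete, here is a minimal Python sketch of a privacy-based routing rule in front of an LLM proxy. Everything named here is an assumption for illustration: the proxy URL, the model aliases `groq-llama-3.3-70b` and `local-model`, and the `Task` fields are hypothetical, and the sketch assumes the proxy exposes an OpenAI-style chat completions endpoint. It covers only the privacy criterion, not cost or complexity, and it builds the request without sending it.

```python
import json
import urllib.request
from dataclasses import dataclass

# Hypothetical values; replace with whatever your own proxy exposes.
PROXY_URL = "http://localhost:4000/v1/chat/completions"
CLOUD_MODEL = "groq-llama-3.3-70b"
LOCAL_MODEL = "local-model"


@dataclass
class Task:
    text: str
    contains_sensitive_data: bool
    redacted: bool = False


def choose_model(task: Task) -> str:
    """Route to the cloud only if the text is non-sensitive or already redacted."""
    if task.contains_sensitive_data and not task.redacted:
        return LOCAL_MODEL
    return CLOUD_MODEL


def build_request(task: Task) -> urllib.request.Request:
    """Build (but do not send) a chat completion request addressed to the proxy.

    No API key appears here: in the setup described above, the key is
    configured in the proxy, not in the client.
    """
    payload = {
        "model": choose_model(task),
        "messages": [{"role": "user", "content": task.text}],
    }
    return urllib.request.Request(
        PROXY_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_request(
    Task("Tighten this memo from [Person_A].", contains_sensitive_data=True, redacted=True)
)
print(json.loads(request.data)["model"])
```

Because the client only ever names a model alias, swapping providers becomes a change to the proxy’s configuration rather than to anything inside Word.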

Take full control of your hybrid AI integration today. Start building a secure, professional-grade drafting environment—no subscriptions, no data leaks, and no compromises.

For Intranet and Teamwork: Explore LocPilot for Word to bring private, local AI to your entire organization. Learn More 👉 or Watch A Quick Demo 👀